When the IBM Personal Computer roamed the Earth, the CGA card offered a resolution of 320 by 200 pixels, among others. Later, VGA offered higher resolutions, but the 256-color mode was limited to that resolution. This mode was used for the famous computer game DOOM.

I had thought that the 256-color mode on VGA used 320 by 240, but it used 320 by 200. That height goes back to the CGA card, which was intended to display 25 lines of text rather than 24, so IBM used eight scan lines per character rather than ten: 25 times 8 is 200, where 24 times 10 would have been 240.

On the other hand, 16-color mode allowed higher resolutions, such as 640 by 480, which was the typical resolution (and also the lowest supported resolution) used with Windows 3.1; even EGA offered 640 by 350 with 16 colors.

Later versions of Windows required a minimum resolution of 800 by 600 to be available.

Apart from 320 by 200 and EGA's 640 by 350, all these resolutions are for the 4:3 aspect ratio with square pixels; those two were shown on the same 4:3 screen with pixels taller than they are wide.

Later on, as available monitor resolutions increased, other resolutions became available, following a mathematical pattern:

2048 x 1536 (4:3 x 512)

1920 x 1440 (4:3 x 480)

1600 x 1200 (4:3 x 400)

1280 x 960 (4:3 x 320)

1152 x 864 (4:3 x 288)

1024 x 768 (4:3 x 256)

800 x 600 (4:3 x 200)

640 x 480 (4:3 x 160)
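The pattern in this list can be checked mechanically: dividing both dimensions by their greatest common divisor leaves the aspect ratio, and the divisor itself is the multiplier shown in parentheses. Here is a minimal Python sketch of that arithmetic; the function name `describe` is only an illustrative choice.

```python
from math import gcd

def describe(width, height):
    """Return (ratio_w, ratio_h, multiplier) for a resolution."""
    k = gcd(width, height)
    return width // k, height // k, k

# A few of the 4:3 resolutions from the list above.
for w, h in [(2048, 1536), (1600, 1200), (1024, 768), (640, 480)]:
    rw, rh, k = describe(w, h)
    print(f"{w} x {h} ({rw}:{rh} x {k})")
```

The same function works for any of the other aspect ratios discussed on this page.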

And there were also some resolutions that attempted to offer a widescreen experience without quite attaining the 16:9 aspect ratio of HDTV:

2560 x 1600 (8:5 x 320)

1920 x 1200 (8:5 x 240)

1680 x 1050 (8:5 x 210)

1440 x 900 (8:5 x 180)

1280 x 800 (8:5 x 160)

A few monitors with this aspect ratio are still being made; they are chiefly aimed at professionals who work with color photographs for publication.

Of course, this aspect ratio, of 1.6 to 1, could be said to have an aesthetic advantage, given that the Golden Ratio is 1.618... or

$$\frac{1 + \sqrt{5}}{2}$$

...half of one plus the square root of five.

Because the continued fraction of the Golden Ratio is

$$1 + \cfrac{1}{1 + \cfrac{1}{1 + \cdots}}$$

it holds the distinction of being the most difficult irrational number to approximate well by a fraction. There will be no anomalously good rational approximations to it, the way that 3 16/113 is for pi. Even so, one can get closer by going further along in the Fibonacci sequence; thus, 34/21 is 1.619..., so a 34:21 aspect ratio would be a very close approximation to the Golden Ratio for practical purposes.

Alas, however, no one makes a monitor with, say, a 2040 by 1260 resolution, or one with a 1632 by 1008 resolution.
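To see how quickly the Fibonacci ratios close in on the Golden Ratio, here is a small Python sketch that lists the ratios of consecutive Fibonacci numbers along with their error. The helper name `fibonacci_ratios` is made up for this illustration.

```python
from fractions import Fraction
from math import sqrt

PHI = (1 + sqrt(5)) / 2  # the Golden Ratio, 1.618...

def fibonacci_ratios(count):
    """Ratios of consecutive Fibonacci numbers: 2/1, 3/2, 5/3, ..."""
    a, b = 1, 2
    ratios = []
    for _ in range(count):
        ratios.append(Fraction(b, a))
        a, b = b, a + b
    return ratios

for r in fibonacci_ratios(8):
    error = abs(float(r) - PHI)
    print(f"{r.numerator}:{r.denominator} = {float(r):.4f} (error {error:.4f})")
```

Note that 8:5, the aspect ratio discussed above, is itself one of these ratios, and 34:21 appears three steps further along.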

There were also some screens having the slightly wider 5:3 aspect ratio:

1920 x 1152 (5:3 x 384)

1280 x 768 (5:3 x 256)

Another aspect ratio supported by video cards is 25:16, as shown with this resolution:

1600 x 1024 (25:16 x 64)

And there are also some that, for whatever reason, are only ever so slightly off the 16:9 aspect ratio:

1768 x 992 (221:124 x 8)

1176 x 664 (147:83 x 8)

with aspect ratios of 1.782... and 1.771... respectively, instead of 1.7777... .

In this group, but with slightly different aspect ratios, are also these resolutions:

1366 x 768 (683:384 x 2)

1360 x 768 (85:48 x 16)

On the other hand, there is also at least one screen resolution, presumably intended for specialized applications, with an aspect ratio that is even less elongated than 4:3:

1280 x 1024 (5:4 x 256)

Indeed, I've found that the monitors with this aspect ratio that one local computer store offers are especially designed for point-of-sale applications.

HDTV originally offered two resolutions with greater definition than that of conventional analog television, and also changed the aspect ratio to 16:9.

These two resolutions were 1080i and 720p, which offered 1920 by 1080 pixels and 1280 by 720 pixels respectively. The letter suffixed after the vertical resolution indicates that while progressive scan was possible with 720 lines, images with 1080 lines had to be interlaced.

Today, computer monitors tend to have the 16:9 aspect ratio instead of the 4:3 aspect ratio.

And, as I write this, a shortage of new-generation graphics cards is beginning to come to an end.

The new generation of graphics cards offers exciting new features, but the story begins with the previous generation.

On August 20, 2018, Nvidia announced the GeForce 20 series of video cards, built on the Turing architecture, with the GeForce RTX 2080 being the first of them to ship one month later.

These cards offered hardware support for real-time ray-traced graphics in video games.

When computers attempt to render images of three-dimensional scenes, there are a number of different algorithms to choose from. One can begin with a wire-frame drawing of the objects to be depicted, and then give them a little bit more solidity by eliminating hidden lines.

The next step is to fill in the surface polygons between the lines bounding the objects to be depicted with solid colors.

These techniques were used on 8-bit computers for simple 3-D games, although usually 2-D graphics was used instead, because even with the simplest of techniques, 3-D graphics in real-time was really too demanding for an 8-bit computer.

But as hardware advanced, additional techniques, originally developed by academic researchers on expensive high-performance computers, began to be used.

Texture mapping used images, possibly repeating, instead of solid colors to fill the surfaces of objects.

Techniques like Phong shading allowed curved surfaces and their illumination to be depicted in a realistic-looking manner.

The ideal, however, would be ray-tracing, where the actual path of light from the light source to the viewer was accurately calculated.

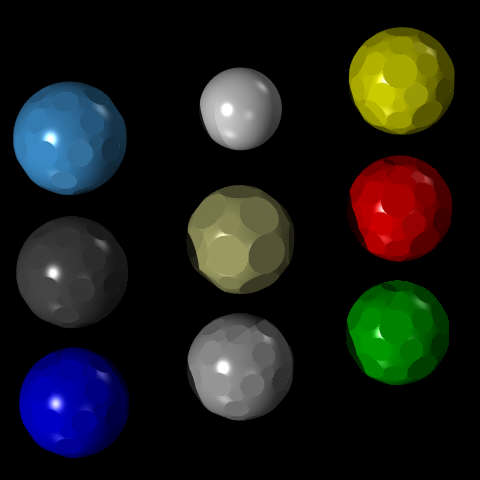

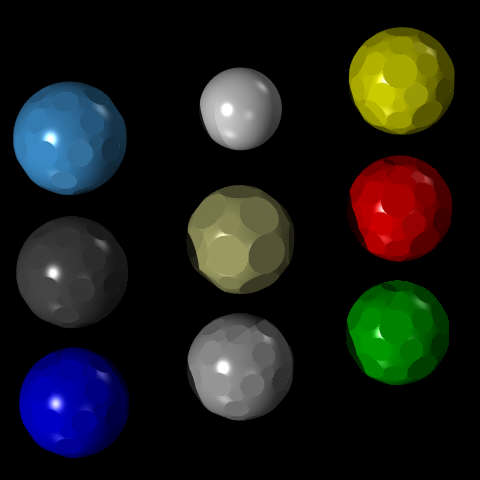

The open-source program POV-Ray (Persistence of Vision Ray Tracing Program) offered people a way to produce computer-generated still images on their computers.

I used it to produce this image:

from a page about Archimedean solids and molecular models.

This is not a particularly impressive image; I can't claim it shows off all that much of what ray-tracing has to offer.

As ray-tracing requires a lot of calculation, it is slow. The new Nvidia graphics cards, and the driver software for them, made real-time ray-tracing feasible by using a number of techniques to economize on calculation by not tracing every ray, but using approximations instead.

To go still further in addressing the challenges presented by ray tracing, Nvidia also introduced DLSS. Many 4K television sets and many Blu-Ray players include an upscaling feature, so that one can watch a movie from a DVD on a high-resolution TV set with missing detail estimated based on algorithms trained on a wide variety of photographs of actual scenes; DLSS brought this kind of technology to video games, allowing a video game to be, in effect, played at a lower resolution than the one at which it is viewed.

Unlike the enhancement of a movie on a TV set, DLSS is called from within the game, as things like controls and menus are not meant to be processed by it.

Even with these measures, and the direct hardware support on Graphics Processing Units (GPUs) with billions of transistors, it took considerably longer to render an image with ray-tracing than it did rendering one by means of the techniques previously used, which at this point had been refined to a level producing images of acceptable quality for playing 3-D games.

Thus, the new Nvidia cards were controversial at the time of their release. Many of the people who were in the market for fancy graphics cards were serious competitive gamers, for whom the highest possible frame rates, not the best-looking graphics, were of paramount concern.

And, of course, when ray-tracing was a new feature, only a limited number of games supported it.

And so people thinking about getting a new graphics card had a difficult decision to make: pay extra for a feature which mostly belonged to the future, or get a card which offered more performance now for less, but which risked being left behind if ray tracing became the norm.

On September 1st, 2020, Jensen Huang welcomed the world to his kitchen, where he presented the new video cards his company had "cooked up", featuring the Ampere graphics architecture. These offered sufficiently increased performance to allow games with ray-tracing enabled to be played at frame rates comparable to what had been possible with earlier graphics cards for conventional rendering.

The models announced at that time were:

| Model | Video memory | CUDA cores | Memory bus width |
|---|---|---|---|
| RTX 3070 | 8 GB GDDR6 | 5888 | 256 bit |
| RTX 3080 | 10 GB GDDR6x | 8704 | 320 bit |
| RTX 3090 | 24 GB GDDR6x | 10496 | 384 bit |

The use of GDDR6x memory instead of GDDR6 memory effectively doubles the width of the memory bus, as this memory uses four signal levels to transmit two bits at once down each line.
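As a toy illustration of how four signal levels carry two bits per transfer, here is a Python sketch. The straightforward mapping of each bit pair to a level number from 0 to 3, and the function names, are assumptions made for the example; the actual level assignment and coding used by GDDR6x are not described here.

```python
def encode_four_level(bits):
    """Pack a bit sequence into four-level symbols, two bits per symbol."""
    if len(bits) % 2:
        raise ValueError("need an even number of bits")
    # Assumed mapping: the bit pair (hi, lo) becomes level 2*hi + lo.
    return [2 * bits[i] + bits[i + 1] for i in range(0, len(bits), 2)]

def decode_four_level(symbols):
    """Recover the original bits from the four-level symbols."""
    bits = []
    for level in symbols:
        bits.extend([level >> 1, level & 1])
    return bits

data = [1, 0, 1, 1, 0, 0, 0, 1]
symbols = encode_four_level(data)
print(symbols)                      # half as many symbols as bits
print(decode_four_level(symbols))   # the original eight bits
```

Eight bits go down the line as four symbols, which is why the same number of transfers moves twice the data.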

Subsequent models added later were:

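| Model | Video memory | CUDA cores | Memory bus width |
|---|---|---|---|
| RTX 3060 | 12 GB GDDR6 | 3584 | 192 bit |
| RTX 3060 Ti | 8 GB GDDR6 | 4864 | 256 bit |
| RTX 3070 Ti | 8 GB GDDR6x | 6144 | 256 bit |
| RTX 3080 Ti | 12 GB GDDR6x | 10240 | 384 bit |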

The GDDR6x memory on the higher-end models doubled memory bandwidth for the same frequency by using four signal levels.

The 3080 was presented as the "flagship" model, with the 3090 as the "enthusiast" model; the 3090 was capable of gaming at the reasonable frame rate of 60 FPS even at an 8K resolution.

As well, an improved 2.0 version of DLSS was announced at that time.

Shortly after, AMD announced their new line-up of video cards. These included hardware support for ray tracing as well. An upscaling feature, Fidelity FX Super Resolution (FSR), which is not based on sophisticated AI techniques like those used by Nvidia, was also announced as forthcoming; it has since become available.

Their lineup of cards was:

| Model | Video memory | Memory bus width | Compute units | Ray accelerators | Cache |
|---|---|---|---|---|---|
| RX 6700 XT | 12 GB | 192 bit | 40 | 40 | 96 MB |
| RX 6800 | 16 GB | 256 bit | 60 | 60 | 128 MB |
| RX 6800 XT | 16 GB | 256 bit | 72 | 72 | 128 MB |
| RX 6900 XT | 16 GB | 256 bit | 80 | 80 | 128 MB |

Thus, the distinction between the two companies is still present, as Nvidia is placing more emphasis on the new technology of ray-tracing than AMD, but AMD is not taking the chance of being left behind by completely neglecting that field.

Recently, Nvidia added a new mode option to its DLSS 2.0 in order to permit faster gameplay at the very high resolution of 8K.

Now that this background information is out of the way, here is a little table of 16:9 graphics resolutions commonly used today on home computers, but with some others added in that may be merely hypothetical at present, however much they seemed to me to fit in mathematically to the sequence:

"8K" 7680 x 4320 (16:9 x 480) *

6912 x 3888 (16:9 x 432)

6400 x 3600 (16:9 x 400)

6144 x 3456 (16:9 x 384)

5760 x 3240 (16:9 x 360)

5120 x 2880 (16:9 x 320)

4800 x 2700 (16:9 x 300)

4608 x 2592 (16:9 x 288)

4096 x 2304 (16:9 x 256)

"4K" 3840 x 2160 (16:9 x 240) *

3456 x 1944 (16:9 x 216)

3200 x 1800 (16:9 x 200)

3072 x 1728 (16:9 x 192)

2880 x 1620 (16:9 x 180)

2560 x 1440 (16:9 x 160) *

2400 x 1350 (16:9 x 150)

2304 x 1296 (16:9 x 144)

2048 x 1152 (16:9 x 128)

(2K?) 1920 x 1080 (16:9 x 120) *

1728 x 972 (16:9 x 108)

1600 x 900 (16:9 x 100) *

1536 x 864 (16:9 x 96)

1440 x 810 (16:9 x 90)

1280 x 720 (16:9 x 80) *

1200 x 675 (16:9 x 75)

1152 x 648 (16:9 x 72)

1024 x 576 (16:9 x 64)

(1K?) 960 x 540 (16:9 x 60)

Asterisks mark the resolutions I've actually seen in the wild; so at least one of my fanciful additions, 1600 by 900, is indeed real.

The presets used in DLSS and FSR currently are:

| Preset | DLSS | FSR |
|---|---|---|
| Ultra Quality | | 1.3x |
| Quality | 1.5x | 1.5x |
| Balanced | 1.72x | 1.7x |
| Performance | 2x | 2x |
| Ultra Performance | 3x | |

Now, 1.5x, 2x, and 3x are all simple to understand.

1.75x would infer seven pixels from four, and 1.66x would infer five pixels from three. If you infer twelve pixels from seven, that would be 1.7142857... times, so I would have been inclined to think this is what is being done at both "Balanced" settings, but this is not in line with how these features are claimed to work by the respective manufacturers associated with them.

Given that the most common resolutions listed above are all multiples of 40 in every direction, to ensure that the source resolution consists of an integral number of pixels in each direction as well, ratios such as 40:31 for Ultra Quality, and 40:23 (for Nvidia) or 80:47 (for AMD) for Balanced would seem to be called for.

Of course, I had formed this large list of possible resolutions to see if I could find pairs of established resolutions that approximated the factors associated with the less obvious scale factors...

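| | 128 | 144 | 150 | 160 | 180 | 192 | 200 | 216 | 240 | 256 | 288 | 300 | 320 | 360 | 384 | 400 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 216 | | | | | | | | | 1.11 | 1.19 | 1.33 | 1.39 | 1.48 | 1.66 | 1.78 | 1.85 |
| 200 | | | | | | | | 1.08 | 1.2 | 1.28 | 1.44 | 1.5 | 1.6 | 1.8 | 1.92 | 2 |
| 192 | | | | | | | 1.04 | 1.12 | 1.25 | 1.33 | 1.5 | 1.56 | 1.67 | 1.87 | 2 | |
| 180 | | | | | | 1.07 | 1.11 | 1.2 | 1.33 | 1.42 | 1.6 | 1.67 | 1.78 | 2 | | |
| 160 | | | | | 1.12 | 1.2 | 1.25 | 1.35 | 1.5 | 1.6 | 1.8 | 1.87 | 2 | | | |
| 150 | | | | 1.07 | 1.2 | 1.28 | 1.33 | 1.44 | 1.6 | 1.71 | 1.92 | 2 | | | | |
| 144 | | | 1.04 | 1.11 | 1.25 | 1.33 | 1.39 | 1.5 | 1.67 | 1.78 | 2 | | | | | |
| 128 | | 1.12 | 1.17 | 1.25 | 1.41 | 1.5 | 1.56 | 1.69 | 1.87 | 2 | | | | | 3 | |
| 120 | 1.06 | 1.2 | 1.25 | 1.33 | 1.5 | 1.6 | 1.67 | 1.8 | 2 | | | | 3 | | | |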

but the only approach to 1.3 is 1.33 (4 to 3) and at first the only approach to 1.7 or 1.72 was 1.78 (16 to 9). And interestingly enough, these two ratios are also the aspect ratios we've been talking about.

Adding 75, 150, and 300 times 16:9 to the resolutions, however, does give, finally, an approach to the Balanced ratio: 128 to 75.
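This kind of search is easy to automate. The Python sketch below forms every ratio between two multipliers of 16:9 and picks the one nearest a target factor. The list of multipliers (those in the table above plus 75) and the function name `closest_ratio` are assumptions chosen for the illustration.

```python
from fractions import Fraction
from itertools import combinations

# Multipliers of 16:9 taken from the table above, with 75 added.
MULTIPLIERS = [75, 120, 128, 144, 150, 160, 180, 192, 200,
               216, 240, 256, 288, 300, 320, 360, 384, 400]

def closest_ratio(multipliers, target):
    """Return the reduced larger/smaller ratio that lies nearest to target."""
    ratios = {Fraction(b, a) for a, b in combinations(sorted(multipliers), 2)}
    return min(ratios, key=lambda r: abs(float(r) - target))

for target in (1.7, 1.72):
    best = closest_ratio(MULTIPLIERS, target)
    print(f"nearest to {target}: {best.numerator} to {best.denominator} = {float(best):.4f}")
```

Because the ratios are stored as reduced fractions, 256 to 150 and 128 to 75 count as the same ratio.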

However, there is no need to guess. AMD has, on this page, given precise details on what their Balanced mode is doing:

| Resolution on the screen | Source input resolution (Fidelity FX Super Resolution) | Source input resolution (Deep Learning Super Sampling) |
|---|---|---|
| 1920 x 1080 | 1129 x 635 | 1113 x 626 |
| 2560 x 1440 | 1506 x 847 | 1484 x 835 |
| 3440 x 1440 | 2024 x 847 | |
| 3840 x 2160 | 2259 x 1270 | 2227 x 1252 |

If you multiply 1129 by two, you get 2258, not 2259. So it is immediately obvious we are not dealing with an absolutely fixed ratio.

From one source, I have found a claim that the source vertical resolution for the Balanced mode of DLSS where the target vertical resolution is 2160 is positively known (rather than calculated) to be 1252 instead of 1270, and I have subsequently found some additional information elsewhere, although I can't be sure if the input resolutions given are positively known, or only calculated in those cases. However, I did find three sources for the Nvidia source resolutions; they only differ in that the source resolution for 1920 x 1080 is given either as 1114x626 or 1113x626.

2160/1270 is 216/127, or 1.7007874..., and 2160/1252 is 540/313, or 1.7252396... and so, either way, there appear to be no simple ratios used.
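The effective factors for every row can be worked out the same way. This Python sketch uses the figures quoted in the table above and computes the horizontal and vertical scale factors as exact fractions; the names `BALANCED` and `factors` are only illustrative.

```python
from fractions import Fraction

# (screen, FSR source, DLSS source) as quoted above; None where no figure is given.
BALANCED = [
    ((1920, 1080), (1129, 635), (1113, 626)),
    ((2560, 1440), (1506, 847), (1484, 835)),
    ((3440, 1440), (2024, 847), None),
    ((3840, 2160), (2259, 1270), (2227, 1252)),
]

def factors(screen, source):
    """Horizontal and vertical scale factors as exact fractions."""
    return Fraction(screen[0], source[0]), Fraction(screen[1], source[1])

for screen, fsr, dlss in BALANCED:
    for name, source in (("FSR", fsr), ("DLSS", dlss)):
        if source is None:
            continue
        fx, fy = factors(screen, source)
        print(f"{screen[0]} x {screen[1]} {name}: {float(fx):.4f} x {float(fy):.4f}")
```

The factors differ slightly from row to row and between the two directions, which is consistent with the source dimensions having been rounded to whole pixels.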

Of course, if one feels the need for a "Balanced" mode that lies between Quality at 1.5x and Performance at 2x, and there is no need for a simple integer ratio, one would obviously choose the geometric mean. 1.5 times the square root of 4/3, which is the square root of 3, happens to be 1.7320508..., which is close enough to the figures chosen by both Nvidia and AMD. Why they both avoided the precise figure, though, remains a mystery. As well, even if a simple ratio is no necessity for upscaling, I would still have thought that one would be helpful, and 1.75 is relatively close at hand. Meanwhile, 40:23 upscaling (a factor of 1.7391304347826..., so it could be advertised as 1.74, not too far from AMD's 1.7 and Nvidia's 1.72, although only slightly closer than the even simpler 1.75) would consistently fit most popular resolutions:

| Screen | 40:23 |
|---|---|
| 1600 x 900 | 920 x 517.5 |
| 1920 x 1080 | 1104 x 621 |
| 2560 x 1440 | 1472 x 828 |
| 3200 x 1800 | 1840 x 1035 |
| 3840 x 2160 | 2208 x 1242 |

well, perhaps not all of them, although 1600 by 900, despite existing, does not seem to have been one considered.
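The 40:23 figures can be reproduced with exact arithmetic, which also makes it obvious when a resolution does not divide evenly. This is only a sketch of the calculation; `source_resolution` is a name invented for the example and has nothing to do with how DLSS or FSR actually pick their input sizes.

```python
from fractions import Fraction

RATIO = Fraction(23, 40)  # source pixels per screen pixel for 40:23 upscaling

def source_resolution(width, height, ratio=RATIO):
    """Exact source dimensions; a non-integer result means the ratio does not fit."""
    return width * ratio, height * ratio

for w, h in [(1600, 900), (1920, 1080), (2560, 1440), (3200, 1800), (3840, 2160)]:
    sw, sh = source_resolution(w, h)
    fits = sw.denominator == 1 and sh.denominator == 1
    verdict = "fits" if fits else "does not fit"
    print(f"{w} x {h} -> {float(sw):g} x {float(sh):g} ({verdict})")
```

Only dimensions that are multiples of 40 in both directions come out as whole numbers, which is why 1600 by 900 is the odd one out.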

Speaking of video upscaling, sometimes it seems that people watching the competition between Nvidia and AMD forget that upscaling is used in some DVD and Blu-Ray players, as well as television sets.

A web search led me to a news item from August 2008 which cited the Regza ZF television from Toshiba as the world's first TV set with upscaling. I am not sure when other TV makers first came out with their upscaling features, but I have found references to SONY's X-Reality Pro upscaling which date back at least to 2012.

This significantly precedes DLSS 1.0, which was announced by Nvidia in 2018, and shipped the following year.

Also, it is worth mentioning that, while 4K television sets and computer monitors are standardized at the 3840 x 2160 resolution and the 16:9 aspect ratio, movie theatres use the 2.35:1 and 1.85:1 aspect ratios, and as movies are now often distributed digitally rather than on 35mm film, there are standards for this as well; the Digital Cinema Initiatives (DCI) Digital Cinema System Specification defines 4K standards for these aspect ratios of 4096 x 1716 and 3996 x 2160 respectively. A "full frame" option, using the largest dimension from each of these, of 4096 x 2160, also exists. This last option has an aspect ratio of 256:135.

I have not seen monitors with that resolution advertised, but you can buy monitors with a 5120 x 2160 resolution, for an aspect ratio of 64:27 (this is 2.37, so it is in the CinemaScope ballpark). Of course, at present, a monitor with such a high resolution is expensive.

I just took another look; one is now available for as little as $1,300 here in Canada (relatively speaking; one other model was $2,000), and that model is available for $1,000 in the United States.

So perhaps producing major theatrical movies on your home computer is starting to become affordable, although, of course, many other elements would be required as well.

There is at least one other resolution with this aspect ratio as well:

2560 x 1080 (64:27 x 40)

5120 x 2160 (64:27 x 80)

and several monitors with these resolutions are referred to as Ultrawide.

Not considered here are double-wide aspect ratios, which also exist; not only 32:9, which is twice as wide as 16:9, but also 16:5, which is twice as wide as 8:5. As well, other extra-wide aspect ratios exist that aren't quite so wide, such as 12:5 and 43:18.

Quite a few monitors are made with a 43:18 aspect ratio, usually with a resolution of 3440 x 1440: this is a popular resolution for curved ultra-wide gaming monitors. However, these monitors are usually advertised as having a 21:9 aspect ratio instead, so that the numbers involved are smaller. (I suppose it's possible that the pixels actually are not square, but somehow I doubt it.)

The 12:5 aspect ratio is often lumped together with the 21:9 aspect ratio, and they are indeed quite close together: 12:5 can be expressed as 21.6 to 9, while what is sold as 21:9 is actually 43:18, which is 21.5 to 9.

And, incidentally, 2.35 to 1 is 21.15 to 9, and 2.37 to 1 is 21.33 to 9, so these ratios are just slightly wider than CinemaScope, the widest movie theatre experience.
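For anyone who wants to check these "to 9" figures, the conversion is just the aspect ratio multiplied by nine. A minimal Python sketch follows; the function name `to_nine` is hypothetical.

```python
from fractions import Fraction

def to_nine(ratio):
    """Express an aspect ratio as 'n to 9', rounded to two decimals."""
    return round(float(Fraction(ratio) * 9), 2)

# Ratios may be given as exact fractions or as decimal strings.
for label, ratio in [("12:5", Fraction(12, 5)), ("43:18", Fraction(43, 18)),
                     ("64:27", Fraction(64, 27)), ("2.35:1", "2.35")]:
    print(f"{label} is {to_nine(ratio)} to 9")
```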

Copyright (c) 2021 John J. G. Savard